We have seen more machine learning in how Google ranks pages and images in search results. That may leave traditional ranking signals behind. It is still worth looking at those older ranking signals because they may continue to play a role in ranking. As Simran IT Services is writing about this new patent on ranking image results, we decided to include what we used to look at when ranking images. Images can rank in image search, and they can also help the pages they are on rank higher by making a page more relevant for the query terms it ranks for.

Here are signals that we would include when trying to rank image search results:

Use meaningful images reflecting what the page is about – make them relevant to a query

Use an image file name relevant to what the image is about (I separate words in file names for images using hyphens, too)

Use alt text in the alt attribute that describes the image well, with text relevant to the query, and avoid keyword stuffing

Use a caption that is helpful and relevant to the query term the page is about

Use a unique title and associated text on the page relevant to what the page is about, and what the image shows

Use a properly sized image at a decent resolution that isn’t mistaken for a thumbnail

These signals help rank image search results and help that page rank as well. The patent doesn’t list the features that help images rank, such as alt text, captions, or file names. It does refer to “features” that likely include those as well as other signals. These machine learning patents will likely become more common from Google.

Machine Learning Models to Rank Image Search Results

The patent says the ranking model may be any of several different types of machine learning model.

Those models can be:

Deep machine learning (e.g., a neural network that includes many layers of non-linear operations.)

Other models (e.g., a generalized linear model, a random forest, a decision tree model, and so on.)

This machine learning model accurately generates relevance scores for image-landing page pairs in the index database.

The patent tells us about the image search system, which includes a training engine.

The training engine trains the machine learning model using training data from image-landing page pairs already associated with ground truth or known values of the relevance score.

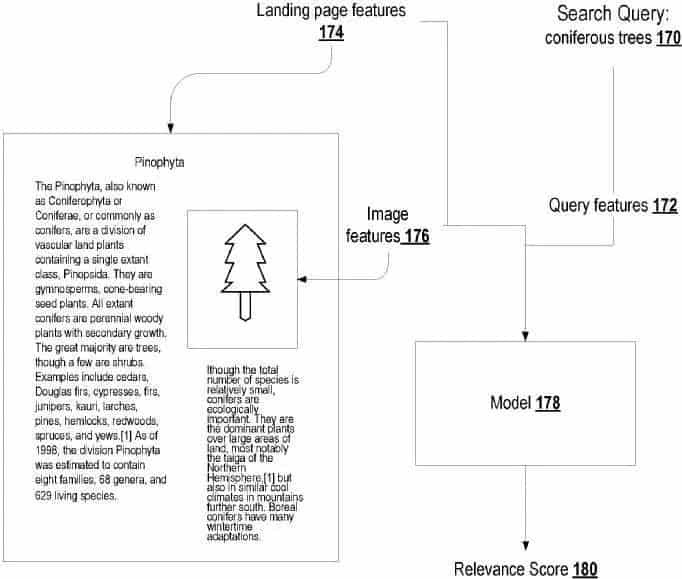

In the example shown, the machine learning model generates a relevance score for an image search result from image features, landing page features, and query features. A searcher submits an image search query to the image search engine. The system generates image query features based on the user-submitted image search query.

The system also identifies landing page features for the landing page identified by the particular image search result, as well as image features for the image identified by that image search result.

The image search system then provides the query features, the landing page features, and the image features as input to the machine learning model.

Without such a model, Google might rank image search results using separate signals from:

- Features of the image

- Features of the landing page

It would then combine those separate signals following a fixed weighting scheme that is the same for each received search query.

This patent describes how a search engine would rank image search results in this manner:

- Obtaining many candidate image search results for the image search query

- Each candidate image search result identifies a respective image and a respective landing page for that image

- For each candidate image search result, processing the following using an image search result ranking machine learning model trained to generate a relevance score measuring the relevance of the candidate image search result to the image search query:

  - Features of the image search query

  - Features of the particular image identified by the candidate image search result

  - Features of the particular landing page identified by the candidate image search result

- Ranking the candidate image search results based on the relevance scores generated by the image search result ranking machine learning model

- Generating an image search results presentation that displays the candidate image search results according to the ranking

- Providing the image search results for presentation by a user device
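The ranking steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the candidate dictionary layout, the feature names (`wants_photo`, `is_photo`, `topical_match`), and `toy_model` are all hypothetical stand-ins, not anything the patent specifies.

```python
from typing import Callable, Dict, List

Features = Dict[str, float]
# A candidate pairs an image with its landing page (hypothetical layout).
Candidate = Dict[str, object]


def rank_candidates(
    query_features: Features,
    candidates: List[Candidate],
    model: Callable[[Features, Features, Features], float],
) -> List[Candidate]:
    """Score each candidate with the model, then sort by descending relevance."""
    scored = [
        (model(query_features, c["image_features"], c["page_features"]), c)
        for c in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [candidate for _, candidate in scored]


def toy_model(query: Features, image: Features, page: Features) -> float:
    # Hypothetical stand-in for a trained model, using made-up signals.
    return query["wants_photo"] * image["is_photo"] + page["topical_match"]


candidates = [
    {"image": "a.png", "landing_page": "https://example.com/a",
     "image_features": {"is_photo": 0.0}, "page_features": {"topical_match": 0.6}},
    {"image": "b.png", "landing_page": "https://example.com/b",
     "image_features": {"is_photo": 1.0}, "page_features": {"topical_match": 0.2}},
]
ranked = rank_candidates({"wants_photo": 1.0}, candidates, toy_model)
```

The point of the sketch is that the model sees the query, the image, and the landing page together for every candidate, and the ranking is just a sort on the scores it returns.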

Advantages of Using a Machine Learning Model to Rank Image Search Results

If Google can rank image search results based on relevance scores from a machine learning model, it can improve the relevance of the image search results returned in response to the image search query.

This differs from conventional methods to rank resources because the machine learning model receives a single input that includes features of the image search query, the landing page, and the image identified by a given image search result, and uses it to predict the relevance of the image search result to the received query.

This process allows the machine learning model to be more dynamic and give more weight to landing page features or image features in a query-specific manner, improving the quality of the image search results that are returned to the user.

By using a machine learning model, the image search engine does not apply the same fixed weighting scheme for landing page features and image features for each received query. Instead, it combines the landing page and image features in a query-dependent manner.
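To make the contrast concrete, here is a toy comparison of a fixed weighting scheme with a query-dependent one. Everything in it is an assumption for illustration: the `query_visual_intent` feature, the sigmoid gate, and the constant 4.0 are invented here, and a real trained model would learn such interactions rather than have them hand-written.

```python
import math


def fixed_weight_score(image_signal: float, page_signal: float,
                       w_image: float = 0.5, w_page: float = 0.5) -> float:
    """Conventional approach: the same weights apply to every query."""
    return w_image * image_signal + w_page * page_signal


def query_dependent_score(query_visual_intent: float,
                          image_signal: float, page_signal: float) -> float:
    """Toy query-dependent scorer: the query shifts weight between signals."""
    # Sigmoid gate in (0, 1); the factor 4.0 is an arbitrary illustrative value.
    gate = 1.0 / (1.0 + math.exp(-4.0 * query_visual_intent))
    return gate * image_signal + (1.0 - gate) * page_signal


# Same image and landing page signals, two different queries.
visual_query = query_dependent_score(2.0, image_signal=0.9, page_signal=0.1)
textual_query = query_dependent_score(-2.0, image_signal=0.9, page_signal=0.1)
```

With the fixed scheme, the result scores the same for both queries. With the gated version, the strong image signal counts for much more under the visually oriented query than under the text-oriented one.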

The patent also tells us that a trained machine learning model can easily and optimally adjust weights assigned to various features based on changes to the initial signal distribution or additional features.

In a conventional image search, we are told that significant engineering effort is required to adjust the weights of a traditional manually tuned model based on changes to the initial signal distribution.

But under this patented process, adjusting the weights of a trained machine learning model based on changes to the signal distribution is significantly easier, thus improving the ease of maintenance of the image search engine.

Also, if a new feature is added, a manually tuned model adjusts the function on the new feature independently on an objective (i.e., a loss function) while holding the existing feature functions constant.

A trained machine learning model, by contrast, can automatically adjust feature weights if a new feature is added. It can include the new feature and rebalance all its existing weights appropriately to optimize for the final objective.

Thus, the accuracy, efficiency, and maintenance of the image search engine can be improved.

The Indexing Engine

The search engine may include an indexing engine and a ranking engine. The indexing engine indexes image-landing page pairs, and adds the indexed image-landing page pairs to an index database. That is, the index database includes data identifying images and, for each image, a corresponding landing page.

The index database also associates the image-landing page pairs with the following:

Features of the image search query

Features of the images, i.e., features that characterize the images

Features of the landing pages, i.e., features that characterize the landing page

Optionally, the index database also associates the indexed image-landing page pairs in the collections of image-landing pairs with values of image search engine ranking signals for the indexed image-landing page pairs.

Each image search engine ranking signal is used by the ranking engine in ranking the image-landing page pair in response to a received search query.

The ranking engine generates respective ranking scores for image-landing page pairs indexed in the index database based on the values of image search engine ranking signals for the image-landing page pair. The ranking score for a given image-landing page pair reflects the relevance of the image-landing page pair to the received search query, the quality of the given image-landing page pair, or both.

The image search engine can use a machine learning model to rank image-landing page pairs in response to received search queries.

The machine learning model is configured to receive an input that includes:

(i) features of the image search query

(ii) features of an image and

(iii) features of the landing page of that image

From that input, it generates a relevance score that measures the relevance of the candidate image search result to the image search query.

Once the machine learning model generates the relevance score for the image-landing page pair, the ranking engine can then use the relevance score to generate ranking scores for the image-landing page pair in response to the received search query.

Features That May Be Used from Images and Landing Pages to Rank Image Search Results

The first step is to receive an image search query.

Once it has the query, the image search system may identify initial image-landing page pairs that satisfy the image search query.

It would select those from the pairs indexed in the search engine index database, using signals that measure the quality of the pairs, the relevance of the pairs to the search query, or both.

For those pairs, the search system identifies:

Features of the image search query

Features of the image

Features of the landing page

Features Extracted From the Image

These features can include vectors that represent the content of the image. Vectors to represent the image may be derived by processing the image through an embedding neural network, or they may be generated through other image processing techniques for feature extraction. Examples of feature extraction techniques include edge, corner, ridge, and blob detection. Feature vectors can also include vectors generated using shape extraction techniques (e.g., thresholding, template matching, and so on). Instead of or in addition to the feature vectors, when the machine learning model is a neural network, the features can include the pixel data of the image.
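As a rough illustration of edge detection as a feature extraction technique, the sketch below turns a grayscale image into a single number. It uses a simple central-difference gradient, which is my own choice for brevity and not a method named in the patent. The function name `edge_density` and the threshold of 50 are hypothetical.

```python
from typing import List


def edge_density(pixels: List[List[int]], threshold: int = 50) -> float:
    """Fraction of interior pixels whose gradient magnitude exceeds threshold.

    `pixels` is a grayscale image as rows of 0-255 intensity values.
    """
    height, width = len(pixels), len(pixels[0])
    edges = 0
    interior = 0
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = pixels[y][x + 1] - pixels[y][x - 1]  # horizontal change
            gy = pixels[y + 1][x] - pixels[y - 1][x]  # vertical change
            interior += 1
            if abs(gx) + abs(gy) > threshold:
                edges += 1
    return edges / interior if interior else 0.0


flat_image = [[10, 10, 10, 10] for _ in range(4)]
step_image = [[0, 0, 255, 255] for _ in range(4)]  # sharp vertical edge
```

A flat image produces a density of zero, while the image with a sharp vertical step produces a high one. A value like this could sit alongside other entries in an image feature vector.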

Features Extracted From the Landing Page

These aren’t the kinds of features that I have usually thought about when optimizing images. These features can include:

The date the page was first crawled or updated.

Data characterizing the author of the landing page

The language of the landing page

Features of the domain that the landing page belongs to

Keywords representing the content of the landing page

Features of links to the image and landing page such as the anchor text or source page for the concerned links

Features that describe the context of the image on the landing page

And so on

Features Extracted From the Landing Page That Describe the Context of the Image in the Landing Page

The patent interestingly separated these features:

Data characterizing the location of the image within the landing page

The prominence of the image on the landing page

Textual descriptions of the image on the landing page

Etc.

More Details on the Context of the Image on the Landing Page

The patent points out some alternative ways that the location of the image within the landing page might be found:

Using pixel-based geometric location in horizontal and vertical dimensions

User-device based length (e.g., in inches) in horizontal and vertical dimensions

An HTML/XML DOM-based XPATH-like identifier

A CSS-based selector

Etc.

The prominence of the image on the landing page can be measured using the relative size of the image as displayed on a generic device and a specific user device. The textual descriptions of the image on the landing page can include alt-text labels for the image, text surrounding the image, and so on.
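One simple way to picture a relative-size measure of prominence is the share of the display area that the image covers. The sketch below assumes that interpretation. The function name `image_prominence`, the cap at 1.0, and the 1280 by 720 display are illustrative choices, not details from the patent.

```python
def image_prominence(image_width: float, image_height: float,
                     viewport_width: float, viewport_height: float) -> float:
    """Share of the viewport area covered by the image, capped at 1.0."""
    if viewport_width <= 0 or viewport_height <= 0:
        raise ValueError("viewport dimensions must be positive")
    ratio = (image_width * image_height) / (viewport_width * viewport_height)
    return min(ratio, 1.0)


# A 640x360 image as displayed on a hypothetical 1280x720 generic device.
prominence = image_prominence(640, 360, 1280, 720)
```

The same function could be evaluated once for a generic device and once for the specific user device, giving two prominence features for the same image.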

Features Extracted from the Image Search Query

The features from the image search query can include:

Language of the search query

Some or all of the terms in the search query

The time that the search query was submitted

The location from which the search query was submitted

Data characterizing the user device from which the query was received

And so on

How the Features from the Query, the Image, and the Landing Page Work Together

The features may be represented categorically or discretely

Additional relevant features can be created from pre-existing features. Relationships may be created between one or more features through a combination of addition, multiplication, or other mathematical operations.

For each image-landing page pair, the system processes the features using an image search result ranking machine learning model to generate a relevance score for that pair.

The relevance score measures the relevance of the candidate image search result to the image search query. For example, it can measure the likelihood that the user submitting the search query would click on or otherwise interact with the search result. A higher relevance score indicates that the user submitting the search query would find the candidate image search result more relevant and click on it.

The relevance score of the candidate image search result can also be a prediction of a score generated by a human rater to measure the quality of the result for the image search query.

And so on
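The earlier point about creating additional features from pre-existing ones can be shown with a short sketch. The feature names here (`alt_text_match`, `caption_match`, `prominence`) are hypothetical examples chosen for this article, and the two combinations are just one multiplication and one addition.

```python
from typing import Dict


def add_derived_features(features: Dict[str, float]) -> Dict[str, float]:
    """Return a copy of `features` extended with combined features."""
    derived = dict(features)
    # Product: high only when the alt text matches AND the image is prominent.
    derived["alt_match_x_prominence"] = (
        features["alt_text_match"] * features["prominence"]
    )
    # Sum: a pooled text-match signal across alt text and caption.
    derived["total_text_match"] = (
        features["alt_text_match"] + features["caption_match"]
    )
    return derived


base = {"alt_text_match": 0.5, "caption_match": 0.25, "prominence": 0.5}
extended = add_derived_features(base)
```

The original features are left untouched, so a model can be given both the raw and the combined values.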

Adjusting Initial Ranking Scores

The system may adjust the initial ranking scores for the image search results based on the relevance scores to:

Promote search results having higher relevance scores

Demote search results having lower relevance scores

Or both
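The patent summary here does not say how the adjustment is computed, so the following is only one hypothetical way to promote and demote results. The multiplicative form, the `neutral` point of 0.5, and the `strength` parameter are all assumptions.

```python
def adjust_ranking_score(initial_score: float, relevance_score: float,
                         neutral: float = 0.5, strength: float = 1.0) -> float:
    """Promote when relevance is above `neutral`, demote when it is below."""
    return initial_score * (1.0 + strength * (relevance_score - neutral))


promoted = adjust_ranking_score(10.0, relevance_score=0.9)
demoted = adjust_ranking_score(10.0, relevance_score=0.1)
unchanged = adjust_ranking_score(10.0, relevance_score=0.5)
```

A result with a relevance score above the neutral point ends up with a higher ranking score than it started with, and one below the neutral point ends up lower.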

Training a Unique Ranking Machine Learning Model to Rank Image Search Results

The system receives a set of training image search queries.

For each training image search query, it also receives training image search results for the query, each associated with a ground truth relevance score.

A ground truth relevance score is the relevance score that the machine learning model should generate for the image search result. For example, when the relevance scores measure the likelihood that a user would select a search result in response to a given search query, each ground truth relevance score can identify whether a user submitting the given search query selected the image search result, or the proportion of times that users submitting the given search query selected the image search result.

The patent provides another example of how ground-truth relevance scores might be generated:

When the relevance scores generated by the model are a prediction of a score assigned to an image search result by a human, the ground truth relevance scores are actual scores assigned to the search results by human raters.
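For the selection-based kind of ground truth, the "proportion of times" a result was selected can be computed from a log of impressions. The sketch below assumes a simple log format of (query, result id, was selected) tuples, which is a hypothetical layout invented for this example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Impression = Tuple[str, str, bool]  # (query, result_id, was_selected)


def click_through_labels(log: Iterable[Impression]) -> Dict[Tuple[str, str], float]:
    """Proportion of impressions in which each (query, result) was selected."""
    shown = defaultdict(int)
    selected = defaultdict(int)
    for query, result_id, was_selected in log:
        shown[(query, result_id)] += 1
        if was_selected:
            selected[(query, result_id)] += 1
    return {key: selected[key] / shown[key] for key in shown}


log = [
    ("red shoes", "r1", True),
    ("red shoes", "r1", False),
    ("red shoes", "r2", False),
    ("red shoes", "r1", True),
    ("red shoes", "r1", False),
]
labels = click_through_labels(log)
```

Each resulting value lies between 0 and 1 and could serve as the ground truth relevance score for that query and result during training.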

For each training image search query, the system may generate features for each associated image-landing page pair.

For each of those pairs, the system may identify:

(i) features of the image search query

(ii) features of the image and

(iii) features of the landing page.

We are told that extracting, generating, and selecting features may take place before training or using the machine learning model. Examples of features are the ones I listed above related to the images, landing pages, and queries.

The training engine trains the machine learning model by processing, for each image search query:

Features of the image search query

Features of the respective image identified by the candidate image search result

Features of the respective landing page identified by the candidate image search result

The respective ground truth relevance score that measures the relevance of the candidate image search result to the image search query
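As a minimal sketch of what such training could look like, the code below fits a logistic regression, which is one kind of generalized linear model, using plain stochastic gradient descent. This is an assumption made for illustration: the patent allows many model types, and the two-element feature vectors, learning rate, and epoch count here are invented.

```python
import math
from typing import List, Tuple

# (concatenated query, image, and landing page features; ground truth in [0, 1])
Example = Tuple[List[float], float]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights: List[float], bias: float, features: List[float]) -> float:
    """Relevance score in (0, 1) for one feature vector."""
    return sigmoid(bias + sum(w * x for w, x in zip(weights, features)))


def train(examples: List[Example], epochs: int = 500,
          lr: float = 0.5) -> Tuple[List[float], float]:
    """Fit weights by stochastic gradient descent on the log loss."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, target in examples:
            # Gradient of the log loss with respect to the linear output.
            error = predict(weights, bias, features) - target
            for i, x in enumerate(features):
                weights[i] -= lr * error * x
            bias -= lr * error
    return weights, bias


# Toy data: first feature is a hypothetical query match, second is noise.
examples = [([1.0, 1.0], 1.0), ([1.0, 0.0], 1.0),
            ([0.0, 1.0], 0.0), ([0.0, 0.0], 0.0)]
weights, bias = train(examples)
```

After training on this toy data, the model gives a high score when the first feature is present and a low one when it is absent, and it learns to put most of its weight on that feature without anyone setting the weights by hand.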

The patent provides some specific implementation processes that might differ based upon the machine learning system used.

Takeaways to Rank Image Search Results

We have provided some information about different kinds of features Google may have used in the past in ranking image search results. Under a machine learning approach, Google may be paying more attention to features from an image query, features from images, and features from the landing pages those images are found on. The patent lists many of those features, and if you spend time comparing the older features with the ones under the machine learning model approach, you can see there is overlap, but the machine learning approach covers considerably more options.
